GCP page

This guide walks through setting up dedicated GPU resources in your GCP account and using those GPUs for workloads that need GPU compute. In GCP, you select a machine type and then attach GPU accelerators to it within the same node pool. (The GPUs are also replicated across the multiple zones in your region.)

The GPUs available to you depend on the machine type you choose for your cluster. Common GPU-capable machine series are A2, G2, and N1. Make sure your project has enough quota for the machine series that corresponds to your selected GPU type and quantity.
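As a rough illustration of the pairing between GPU type and machine series, here is a minimal Python sketch. The accelerator names follow the identifiers GKE uses at the time of writing; verify them against the GKE documentation before relying on them. The helper names (`machine_series_for`, `fits_quota`) and the quota numbers are hypothetical; you would read the real limit and usage from your project's quota page.

```python
# Sketch: look up which machine series a GPU type needs, then compare a
# requested GPU count with quota numbers that you supply yourself.

# Accelerator type -> machine series (check the GKE docs for the current list).
GPU_TO_MACHINE_SERIES = {
    "nvidia-tesla-a100": "A2",
    "nvidia-a100-80gb": "A2",
    "nvidia-l4": "G2",
    "nvidia-tesla-t4": "N1",
    "nvidia-tesla-p4": "N1",
    "nvidia-tesla-v100": "N1",
    "nvidia-tesla-p100": "N1",
}


def machine_series_for(gpu_type: str) -> str:
    """Return the machine series that can host the given GPU type."""
    try:
        return GPU_TO_MACHINE_SERIES[gpu_type]
    except KeyError:
        raise ValueError(f"unknown GPU type: {gpu_type!r}") from None


def fits_quota(requested_gpus: int, quota_limit: int, quota_in_use: int = 0) -> bool:
    """Return True if the request fits in the remaining quota (hypothetical inputs)."""
    if requested_gpus < 0:
        raise ValueError("requested_gpus must be non-negative")
    return requested_gpus <= quota_limit - quota_in_use


if __name__ == "__main__":
    print(machine_series_for("nvidia-l4"))                # G2
    print(fits_quota(2, quota_limit=4, quota_in_use=3))   # False
```

If `fits_quota` returns `False`, request a quota increase for that GPU type in your region before provisioning the node pool.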

Advanced use cases are subject to some constraints and limitations; refer to the GKE documentation for details.

Pre-requisites

- Argonaut account

- GCP account and VCS connected

- An app in your Git repository that can take advantage of the GPU

Provisioning Cluster

- From the sidebar, go to Environments and click Environment + to provision a new environment in your chosen region.

💡 In the case of GCP, GPUs are only available in selected regions and zones.

- Once the environment is created, click on the Infra tab and click Resource +.

- Choose GKE (Kubernetes cluster).

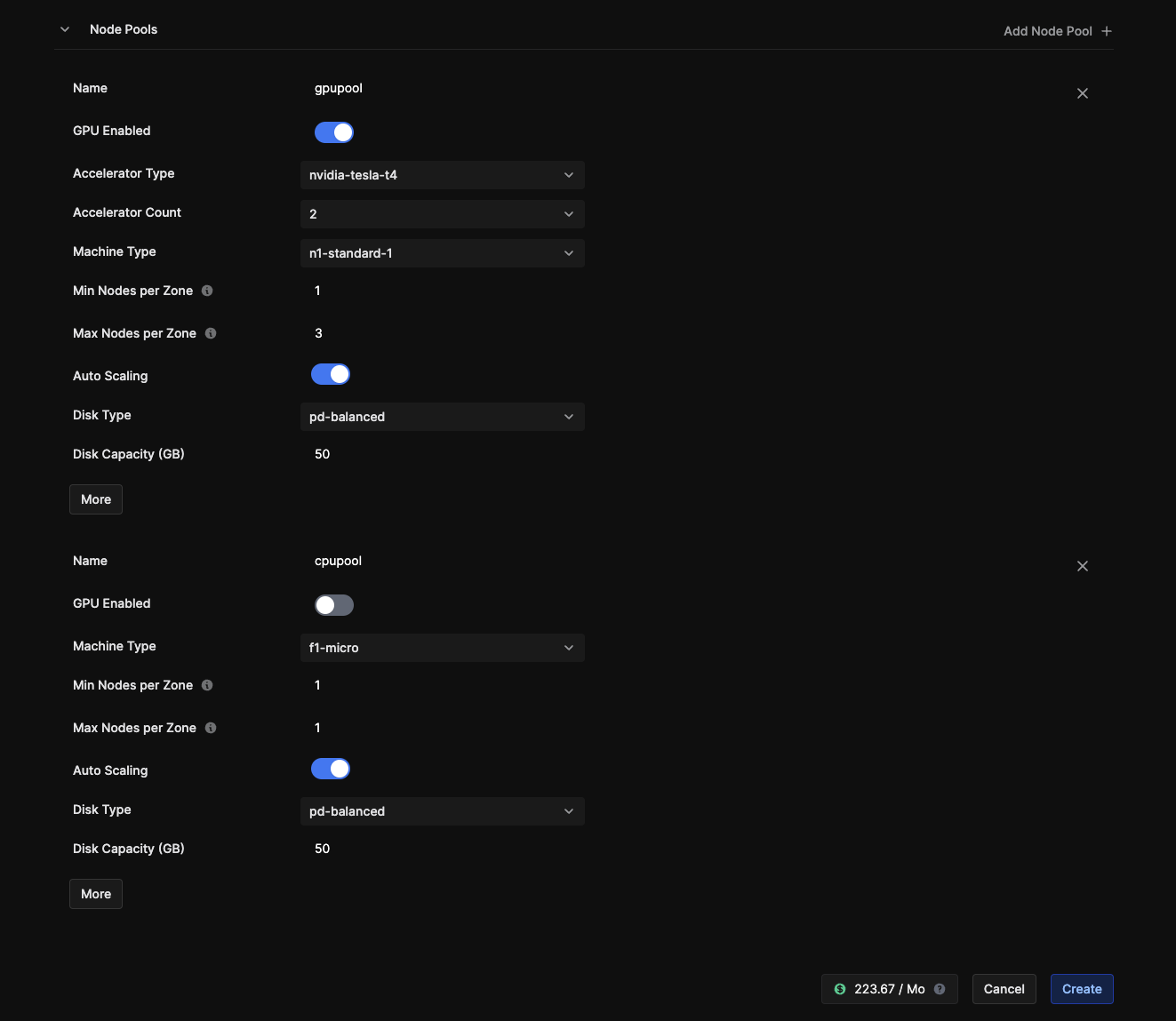

- Create one node pool with CPU-only resources and a separate node pool that also supports GPU accelerators. (If you only need GPU-enabled nodes, create a single node pool with GPU enabled.) Separate pools help save on costs when you run multiple workloads and not all of them need GPU resources.

- Choose a Compute instance that supports GPU in your GPU Node pool, and then toggle Enable GPU.

Here, gpupool is the node pool with GPU enabled, and cpupool is a node pool with only CPU resources.

- Click Create, and your node pools will be deployed in your cluster.
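To make the cost argument for separate pools concrete, here is a minimal sketch of how a workload could be pinned to one pool or the other. It uses the `cloud.google.com/gke-nodepool` node label that GKE sets on every node; the pool names match the example above, and the `node_selector_for` helper is hypothetical. Whether Argonaut applies a selector like this on your behalf is an assumption, so treat this as an illustration of the idea rather than the platform's behavior.

```python
# Sketch: send GPU workloads to the GPU pool and everything else to the
# cheaper CPU-only pool, using the node-pool label GKE puts on each node.

GKE_NODEPOOL_LABEL = "cloud.google.com/gke-nodepool"


def node_selector_for(gpu_count: int,
                      gpu_pool: str = "gpupool",
                      cpu_pool: str = "cpupool") -> dict:
    """Return a Kubernetes nodeSelector for a workload needing `gpu_count` GPUs."""
    if gpu_count < 0:
        raise ValueError("gpu_count must be non-negative")
    pool = gpu_pool if gpu_count > 0 else cpu_pool
    return {GKE_NODEPOOL_LABEL: pool}


if __name__ == "__main__":
    print(node_selector_for(0))  # {'cloud.google.com/gke-nodepool': 'cpupool'}
    print(node_selector_for(1))  # {'cloud.google.com/gke-nodepool': 'gpupool'}
```

With this split, only workloads that request at least one GPU occupy the more expensive GPU nodes.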

Now that your cluster is ready, it’s time to deploy your application and let it make use of the GPU resources.

Deploying an app

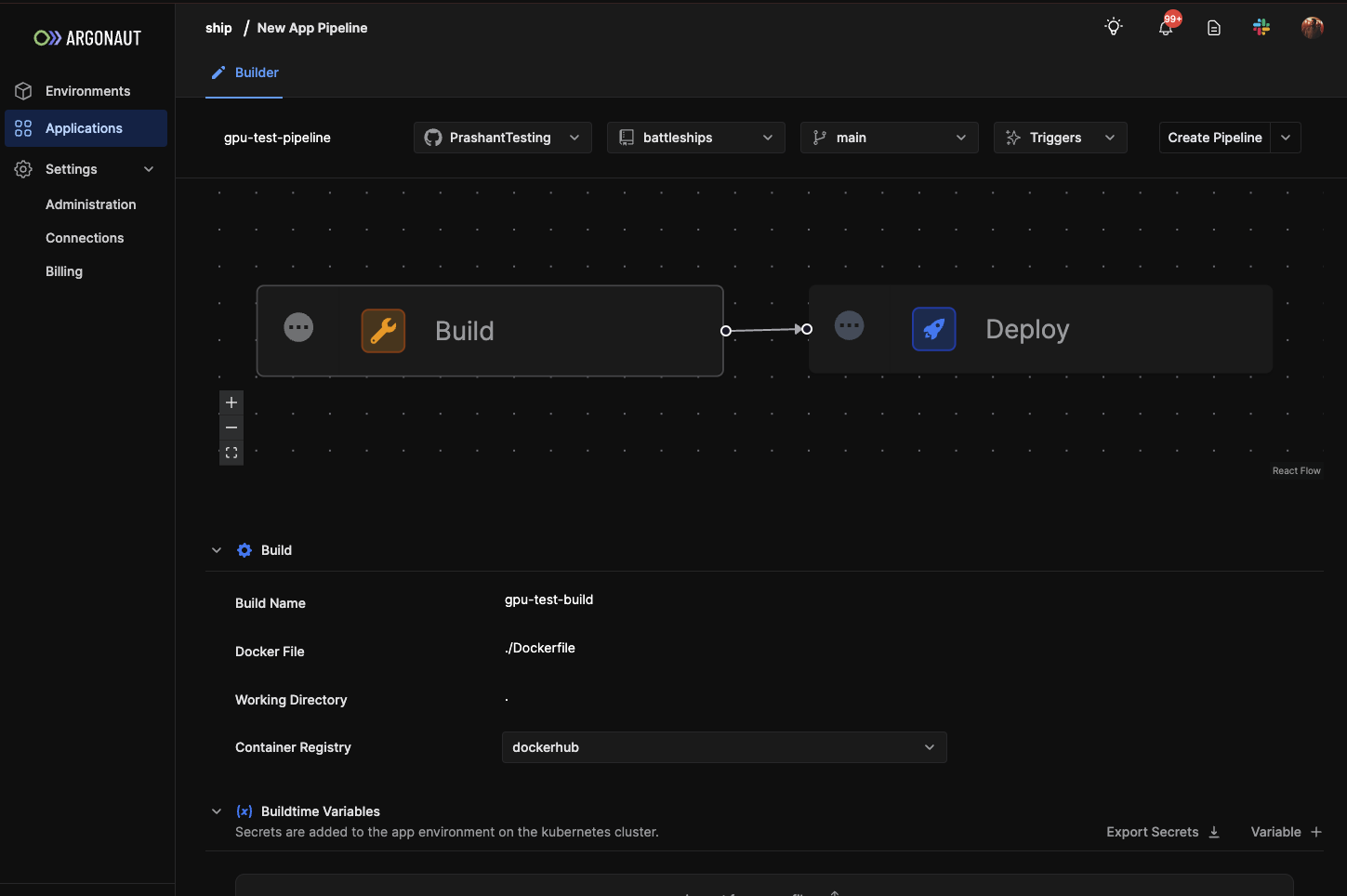

Deploying your app involves two steps: build and deploy. Your Git provider handles the build step through GitLab CI or GitHub Actions, and ArgoCD handles the deploy step.

- Go to the Applications tab on the left.

- Click on Application +. You can now set up multiple pipelines for your application.

- Click Pipeline + to create a new pipeline.

- Enter the build step, choose your repo, and configure the build details.

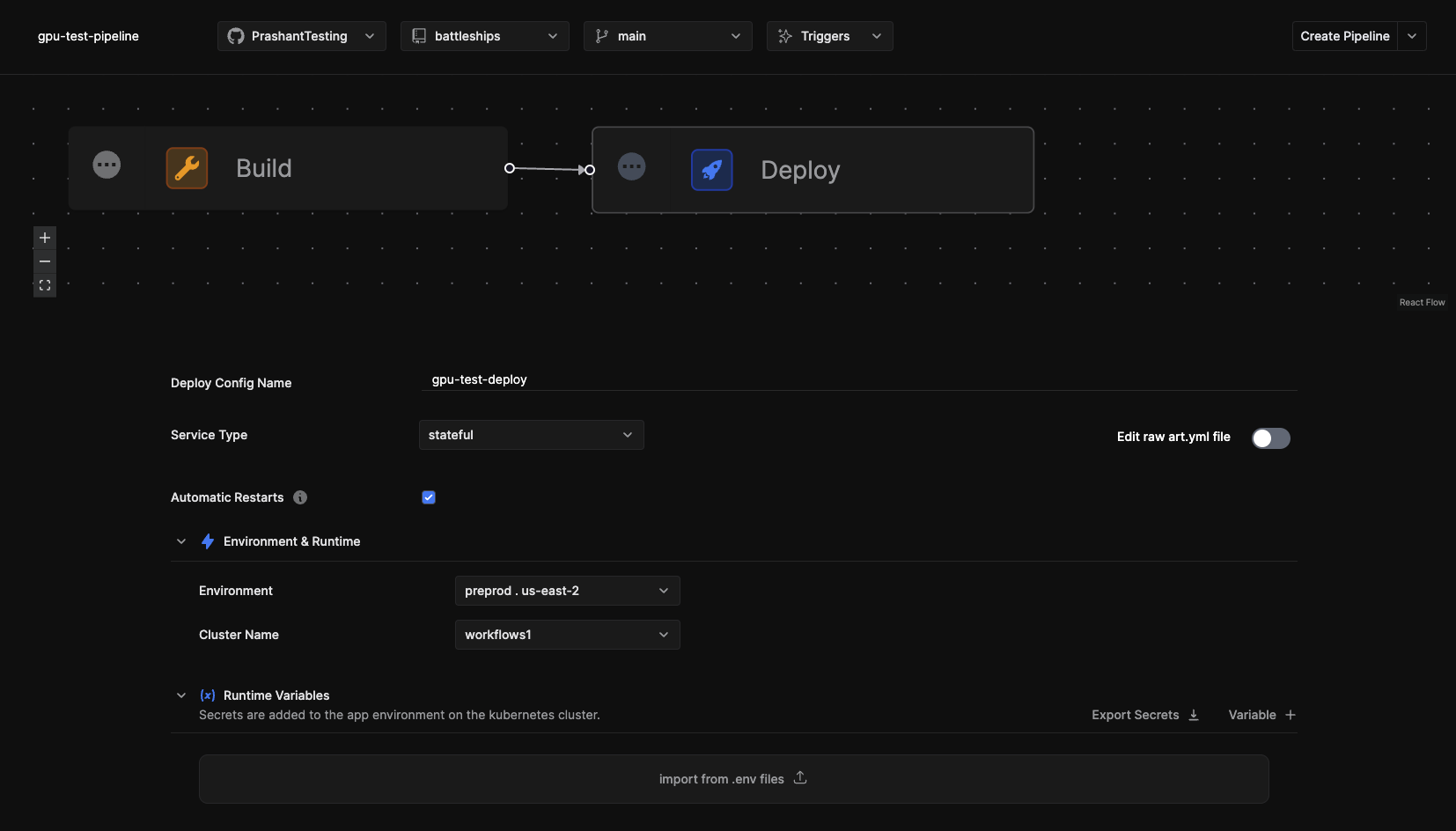

- Select the Deploy step. Choose which environment and cluster it gets deployed to.

- Add any runtime variables, secret files, etc.

- You can then update network services, autoscaling, and storage.

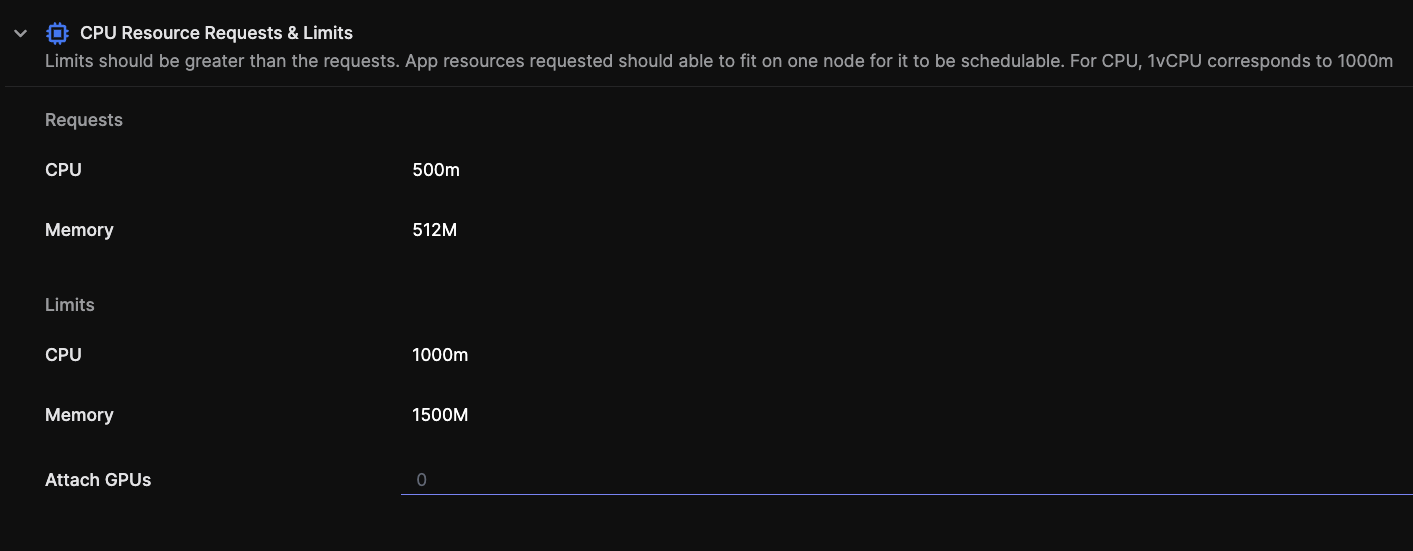

- As a last step, set the CPU and memory requests and limits, and the number of GPUs to attach.

💡 Note: The number of GPUs you attach must be less than or equal to the number of GPUs available in your instance. Refer to the GKE resource in your environment to see how many GPUs you have provisioned.

- Click on Create Pipeline, and your app is deployed.
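The GPU count rule from the note above can be sketched in a few lines of Python. In Kubernetes, GPUs are requested through the `nvidia.com/gpu` extended resource under a container's `limits`. The `gpu_resources` helper and the sample values below are hypothetical, and the exact manifest Argonaut generates from the form is an assumption; the sketch only shows the shape of the resulting resources block and the check against the provisioned GPU count.

```python
# Sketch: build a container "resources" block and reject a GPU count that
# exceeds what the node's instance type actually provides.

def gpu_resources(cpu_request: str, memory_request: str,
                  cpu_limit: str, memory_limit: str,
                  gpus: int, available_gpus: int) -> dict:
    """Return a Kubernetes-style resources dict with an optional GPU limit."""
    if gpus < 0:
        raise ValueError("gpus must be non-negative")
    if gpus > available_gpus:
        raise ValueError(
            f"requested {gpus} GPU(s), but only {available_gpus} available per instance"
        )
    resources = {
        "requests": {"cpu": cpu_request, "memory": memory_request},
        "limits": {"cpu": cpu_limit, "memory": memory_limit},
    }
    if gpus > 0:
        # GPUs are specified under limits using the extended resource name.
        resources["limits"]["nvidia.com/gpu"] = gpus
    return resources


if __name__ == "__main__":
    print(gpu_resources("500m", "1Gi", "1", "2Gi", gpus=1, available_gpus=1))
```

A pod that asks for more GPUs than any node can offer stays unscheduled, so validating the count up front avoids a deployment that never starts.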